Setting data retention rules in Splunk Cloud Platform

Depending on your industry, regulations might govern what data you must keep and for how long. Consider the worst-case scenario: an attacker has had access to your network for several months or years. In that situation, you will need to answer many questions about how the attacker initially gained access, what they did, and when. If you have discarded your logs, you can't answer these critical questions. Furthermore, if your organization has purchased cyber intrusion insurance, the policy might include specific log retention requirements, and a claim might be denied if you don't meet them. Establishing good data retention rules helps you avoid these problems.

How to use Splunk software for this use case

When you consider which index should collect a data source, remember that you set retention policies by index. If you have two data sources, one that you need to keep for 3 years and one that you can discard after 30 days, send them to separate indexes. Otherwise, you will be paying to store 35 months of data you don’t really want, or discarding data 35 months too early.
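Because retention is set per index, one way to plan is to group data sources by how long each must be kept and give each distinct retention period its own index. The sketch below is a hypothetical planning aid, not a Splunk feature: the data source names and retention values are made up for illustration. It also computes how many extra days each source would be kept if everything shared one index sized for the longest requirement.

```python
from collections import defaultdict

# Hypothetical data sources and how long each must be kept, in days.
# Names and values are illustrative only.
REQUIRED_RETENTION_DAYS = {
    "firewall": 3 * 365,
    "vpn_auth": 3 * 365,
    "app_debug": 30,
    "web_access": 30,
}


def plan_indexes(requirements: dict[str, int]) -> dict[int, list[str]]:
    """Group data sources that share a retention requirement.

    Retention is set per index, so each distinct retention period gets
    its own index; sources with the same requirement can share one.
    """
    groups: dict[int, list[str]] = defaultdict(list)
    for source, days in requirements.items():
        groups[days].append(source)
    # Sort for stable, readable output.
    return {days: sorted(sources) for days, sources in sorted(groups.items())}


def wasted_days_if_combined(requirements: dict[str, int]) -> dict[str, int]:
    """Extra days each source would be kept if all sources shared one
    index sized for the longest requirement."""
    longest = max(requirements.values())
    return {source: longest - days for source, days in requirements.items()}


if __name__ == "__main__":
    for days, sources in plan_indexes(REQUIRED_RETENTION_DAYS).items():
        print(f"index with {days}-day retention: {sources}")
```

With these example values, the 30-day sources would be stored for 1,065 extra days if they shared the 3-year index, which is the cost the paragraph above warns about.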

When you set up an index, you are asked to specify:

- How big the index can get (max raw data size). This setting limits how much data an index holds, but it is not the most useful control when your retention requirements are time-based. It defaults to 0, which means the size is unlimited. That's fine, because you can control retention with the searchable retention setting instead.

- How far back in time you need to search (searchable retention). Set your searchable retention to the number of days you need ready access to the data.

- How much more time you need to keep an archive (Dynamic Data Storage). Data rolls to the archive by bucket, not by event: a bucket does not roll until the newest event in it is older than the searchable retention period. As a result, depending on how much data comes in, the index might contain data older than your searchable retention. You might also see “blocky” archiving, where nothing is archived for a while and then a large amount of data moves into the archive at once.
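The bucket-level roll rule explains why data older than the searchable retention can still appear in an index. The following is a deliberately simplified model, assuming a bucket is described only by the timestamps of its earliest and newest events; it is not how Splunk implements bucket rolling, and the `Bucket` class and function names are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Bucket:
    """Simplified bucket: only the time range of the events it holds."""
    bucket_id: str
    earliest_event: datetime
    latest_event: datetime


def buckets_to_archive(buckets, searchable_days, now):
    """Return IDs of buckets old enough to roll out of searchable storage.

    A bucket rolls as a whole, and only once its newest event is older
    than the searchable retention period.
    """
    cutoff = now - timedelta(days=searchable_days)
    return [b.bucket_id for b in buckets if b.latest_event < cutoff]


def oldest_searchable_event(buckets, searchable_days, now):
    """Earliest event still searchable after eligible buckets have rolled.

    This can be older than the retention cutoff, because a bucket that
    also holds newer events keeps its older events searchable.
    """
    rolled = set(buckets_to_archive(buckets, searchable_days, now))
    remaining = [b.earliest_event for b in buckets if b.bucket_id not in rolled]
    return min(remaining) if remaining else None


if __name__ == "__main__":
    now = datetime(2024, 7, 1)
    buckets = [
        Bucket("a", datetime(2024, 1, 1), datetime(2024, 3, 15)),
        Bucket("b", datetime(2024, 2, 1), datetime(2024, 5, 1)),
        Bucket("c", datetime(2024, 5, 1), datetime(2024, 6, 30)),
    ]
    print(buckets_to_archive(buckets, 90, now))       # ['a']
    print(oldest_searchable_event(buckets, 90, now))  # 2024-02-01 00:00:00
```

With a 90-day searchable retention, the cutoff is 2 April 2024. Bucket `b` still holds February events but does not roll, because its newest event is from May. When it finally rolls, all of its events move at once, which is the "blocky" pattern described above.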

Configuring your archive (non-searchable) storage

You have a few options for data that is older than your searchable retention period:

- Discard it:

  - Don’t archive data. Instead, let it age out: when data is older than your searchable retention period, it is deleted.

- Keep it:

  - Allow Splunk to manage your archive data (Splunk Archive, also known as Dynamic Data Active Archive (DDAA)).

  - Set up your own storage using Amazon S3 (self storage, also known as Dynamic Data Self Storage (DDSS)).

  - You can use both options across your indexes, but only one type of archive storage per index.
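To make the three outcomes concrete, the sketch below models where data of a given age ends up for a single index. It is a hypothetical illustration under simplifying assumptions: age is treated as the age of the bucket's newest event, bucket roll timing is ignored, and DDSS retention is left to your own S3 lifecycle rules. Storing a single `ArchiveType` value per index mirrors the "one type of archive storage per index" rule.

```python
from enum import Enum


class ArchiveType(Enum):
    """One archive option per index."""
    NONE = "none"  # let data age out
    DDAA = "ddaa"  # Splunk Archive (Dynamic Data Active Archive)
    DDSS = "ddss"  # your own Amazon S3 bucket (Dynamic Data Self Storage)


def data_location(age_days, searchable_days, archive, archive_retention_days=0):
    """Where data of a given age ends up for one index (simplified model).

    For DDAA, archive_retention_days is the total retention, counted
    from the same starting point as the searchable retention.
    """
    if age_days <= searchable_days:
        return "searchable"
    if archive is ArchiveType.NONE:
        return "deleted"
    if archive is ArchiveType.DDAA:
        return "splunk_archive" if age_days <= archive_retention_days else "deleted"
    # DDSS: how long data stays in S3 is governed by your S3 lifecycle rules.
    return "self_storage"
```

For example, with 90 days searchable and a DDAA archive retention of 270 days, 200-day-old data is in the Splunk archive and 300-day-old data has been deleted.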

Dynamic Data Active Archive (DDAA)

DDAA is not enabled by default. You must configure it manually for each index. If you are working with Splunk Professional Services, your team will discuss the retention periods for each index with you.

To configure DDAA in Splunk Cloud Platform:

- Go to Settings > Indexes and select the index you want to update.

- In the Dynamic Data Storage field, select Splunk Archive.

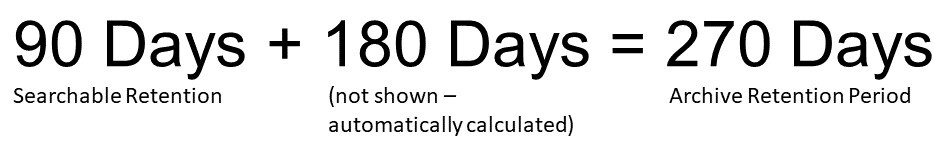

- In the Archive Retention Period field, enter the total number of days that you want to keep data. This value is the sum of the searchable retention and the additional archive period you need for this index. For example, for an index where you want to search the most recent 90 days and keep six more months in archive, your retention settings should be:

  - Searchable Retention: 90 days

  - Archive Retention Period: 270 days

Customers sometimes configure this incorrectly. In the example above, some customers have set the Archive Retention Period to 180 days instead of 270. As a result, they have access to only 6 months of data in total instead of 9.
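The arithmetic behind the correct and incorrect settings is simple but easy to get wrong. This hypothetical helper computes the value to enter in the Archive Retention Period field and, for an existing configuration, how many days data actually spends in the archive. It assumes six months is approximated as 180 days, as in the example.

```python
def archive_retention_period(searchable_days: int, extra_archive_days: int) -> int:
    """Value for the Archive Retention Period field: total days to keep data."""
    if searchable_days <= 0 or extra_archive_days < 0:
        raise ValueError("searchable_days must be positive and "
                         "extra_archive_days must not be negative")
    return searchable_days + extra_archive_days


def archive_only_days(searchable_days: int, archive_retention_days: int) -> int:
    """Days data actually spends in the archive for a given configuration."""
    return max(archive_retention_days - searchable_days, 0)


# Correct: 90 searchable days plus 180 archive days.
print(archive_retention_period(90, 180))  # 270
# Mistake: entering 180 leaves only 90 days in the archive (180 days total).
print(archive_only_days(90, 180))  # 90
```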


If your searchable retention is longer than you need, you can decrease it and move the older data to DDAA. Before making this change, make sure DDAA is properly configured for the index; otherwise, data that falls outside the new searchable period could be dropped. We recommend making this change incrementally and in consultation with your account team or OnDemand Services to minimize performance impact.
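The advice above (configure DDAA first, then decrease incrementally) can be expressed as a pre-change check. The sketch below is hypothetical: the function name, the step size, and the rule that archive retention should cover the current searchable period are illustrative assumptions, not Splunk requirements. That last check applies only if you want to keep all data that is currently searchable. The actual changes are still made in Splunk Cloud Platform, ideally with your account team.

```python
def plan_searchable_decrease(current_days, target_days, step_days,
                             ddaa_enabled, archive_retention_days):
    """Return intermediate searchable-retention values, ending at target_days.

    Refuses to plan if DDAA is not configured, or if the archive
    retention would not cover data currently in searchable storage.
    """
    if not 0 < target_days < current_days:
        raise ValueError("target must be positive and lower than current retention")
    if step_days <= 0:
        raise ValueError("step_days must be positive")
    if not ddaa_enabled:
        raise ValueError("configure DDAA for this index first, or data could be dropped")
    if archive_retention_days < current_days:
        raise ValueError("archive retention does not cover currently searchable data")
    steps = []
    value = current_days
    while value > target_days:
        # Never step below the target.
        value = max(value - step_days, target_days)
        steps.append(value)
    return steps


print(plan_searchable_decrease(365, 90, 90, True, 365))  # [275, 185, 95, 90]
```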

You can check your storage usage in the Cloud Monitoring Console. Look for the dashboards under the License Usage menu.

If you need to review archive data managed by Splunk (DDAA), you can make it searchable in Splunk Cloud Platform again. Your archive remains intact; the restoration process makes a temporary searchable copy of it, which is discarded automatically after 30 days. For performance reasons, Splunk Cloud Platform limits the amount of restored data to 10% of your searchable storage entitlement.
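Before requesting a restore, it can help to check it against the limits described above. This is a hypothetical planning sketch: it assumes the 10% cap applies to the total amount of restored data at any one time, and that sizes are measured in the same unit (GB here) as your entitlement. Confirm both with your account team.

```python
from datetime import date, timedelta

RESTORE_LIMIT_PERCENT = 10  # restored data capped at 10% of searchable entitlement
RESTORE_LIFETIME_DAYS = 30  # restored copy is discarded after 30 days


def max_restore_gb(searchable_entitlement_gb: float) -> float:
    return searchable_entitlement_gb * RESTORE_LIMIT_PERCENT / 100


def can_restore(request_gb: float, currently_restored_gb: float,
                searchable_entitlement_gb: float) -> bool:
    """True if the new restore keeps total restored data within the cap."""
    total = currently_restored_gb + request_gb
    return total <= max_restore_gb(searchable_entitlement_gb)


def restore_expires_on(restored_on: date) -> date:
    """Date on which the temporary searchable copy is discarded."""
    return restored_on + timedelta(days=RESTORE_LIFETIME_DAYS)
```

For a 1,000 GB entitlement, the cap is 100 GB, so a 60 GB restore fits alongside 40 GB already restored, but a 61 GB restore does not.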

If you do not have DDAA on your stack, the Splunk Archive option will be greyed out when you set up an index. If you would like to add DDAA to your stack, contact your Splunk account team.

Dynamic Data Self Storage (DDSS)

When a bucket rolls out of searchable storage into DDSS, its raw data is compressed to reduce storage costs for data you won’t need to search frequently. In this compressed form, the bucket is no longer searchable.

If you are using your own AWS S3 bucket, you can't view the status of your archived data in Splunk Cloud Platform; use the AWS Console instead. To learn how to set rules for how long to keep data in S3, see Managing the Lifecycle of Objects. Data stored in S3 cannot be made searchable in Splunk Cloud Platform again. If you need to review your archive data, one option many customers choose is to spin up a temporary AWS instance in the same region as your S3 bucket and thaw the data there. It’s often possible to use a free Splunk license for this purpose. Learn more about how to thaw data from self storage.
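An S3 lifecycle rule with an `Expiration` action is one way to enforce how long self-storage data is kept. The sketch below builds such a rule and applies it with boto3's `put_bucket_lifecycle_configuration`. The bucket name, rule ID, and prefix are hypothetical placeholders. S3 counts object age from when the object was created, that is, roughly when the bucket left searchable storage, so the expiration should be the additional archive period rather than the total retention.

```python
def expiration_rule(rule_id: str, prefix: str, days_in_archive: int) -> dict:
    """Build an S3 lifecycle rule that expires objects after N days.

    Object age is counted from when the object was written to S3,
    so N is the extra archive period, not the total retention.
    """
    if days_in_archive <= 0:
        raise ValueError("days_in_archive must be positive")
    return {
        "ID": rule_id,
        "Filter": {"Prefix": prefix},
        "Status": "Enabled",
        "Expiration": {"Days": days_in_archive},
    }


def apply_lifecycle(bucket_name: str, rules: list[dict]) -> None:
    """Apply the rules with boto3 (requires AWS credentials)."""
    import boto3  # imported here so building rules needs no AWS setup

    s3 = boto3.client("s3")
    s3.put_bucket_lifecycle_configuration(
        Bucket=bucket_name,
        LifecycleConfiguration={"Rules": rules},
    )


# Hypothetical names: keep self-storage data for 180 days after export.
rule = expiration_rule("splunk-ddss-expiry", "splunk-archive/", 180)
# apply_lifecycle("my-ddss-bucket", [rule])
```

Note that `put_bucket_lifecycle_configuration` replaces the bucket's entire existing lifecycle configuration, so include any rules you already have in the list you pass.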

Next steps

These Splunk resources might help you understand and implement the recommendations in this article: